It was 9:06 a.m.

Coffee in hand. Laptop open. The dashboard loaded like it always did.

TikTok Ads Manager showed conversions from last night.

GA4 didn’t.

Spend looked normal. Traffic looked healthy. Revenue existed in the CRM. Yet GA4 was telling a quieter story: fewer conversions, fewer attributed sessions, weaker performance.

Nothing was broken. Nothing was zero. But nothing lined up.

That was the morning I stopped trusting the dashboard. Not because the data was wrong, but because I realised the story it was telling was incomplete.

At first glance, everything looked “almost right”. And that’s the most dangerous state data can be in.

TikTok showed strong performance. GA4 showed fewer conversions. Attribution windows didn’t align. Timestamps didn’t match. Each platform was technically working, yet none of them agreed.

This wasn’t a tracking outage. It was worse.

It was the kind of gap that doesn’t trigger alerts, but quietly erodes confidence.

The questions came quickly.

- Which number was correct?

- Was TikTok overreporting?

- Did GA4 miss conversions?

- What would I say in the stakeholder meeting later that morning?

The pressure wasn’t about performance. It was about credibility.

I stopped staring at charts and started tracing the path. Not which tool was wrong, but when each tool was observing the user.

That’s when it became clear: GA4 and TikTok weren’t disagreeing. They were watching the same journey from different moments in time.

At this point, the conversation needs to move out of emotion and into architecture.

GA4 and media platforms like TikTok are not designed to answer the same questions. Expecting parity between them is a category error. Each system observes user behaviour through a different lens, with different timing, incentives, and constraints.

What appears as a “data gap” is often a measurement sequencing issue, not a tracking failure.

From a professional standpoint, resolving this requires reframing the problem.

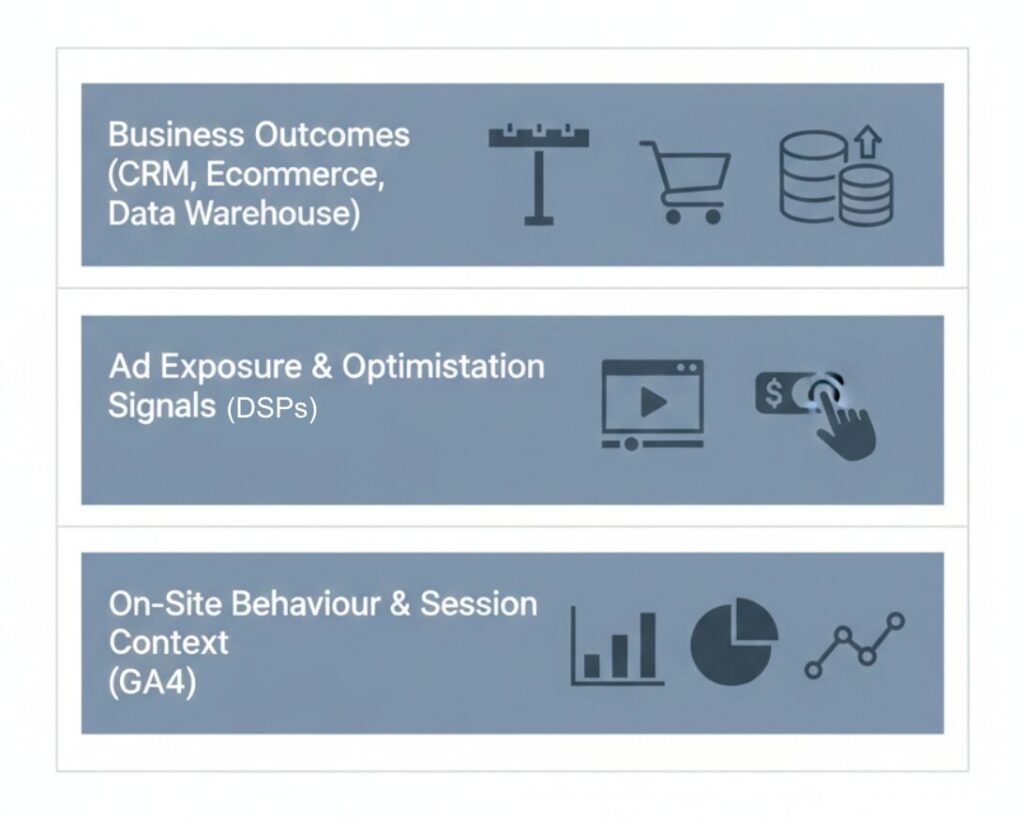

1. Establish Clear Measurement Roles

The first step is governance, not debugging.

GA4 should be treated as the system of record for on-site behaviour and session context. Media platforms such as TikTok should be treated as systems built for ad exposure and optimisation signals. Downstream systems (the CRM, ecommerce platforms, and data warehouses) should be the source of truth for business outcomes.

When a single platform is expected to answer all questions, discrepancies are inevitable.

Clear ownership of questions prevents unnecessary reconciliation work.
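One way to make that ownership explicit is to write it down as a small lookup that reports and analysts can check against. The sketch below is only an illustration: the category names and the `owner_of` helper are hypothetical, and the mapping simply restates the roles described above.

```python
from enum import Enum


class System(Enum):
    GA4 = "GA4"
    MEDIA_PLATFORM = "media platform (e.g. TikTok)"
    DOWNSTREAM = "CRM / ecommerce platform / data warehouse"


# Hypothetical question categories; rename them to match your own reporting.
MEASUREMENT_ROLES = {
    "on_site_behaviour": System.GA4,
    "session_context": System.GA4,
    "ad_exposure": System.MEDIA_PLATFORM,
    "optimisation_signals": System.MEDIA_PLATFORM,
    "business_outcomes": System.DOWNSTREAM,
}


def owner_of(question_category: str) -> System:
    """Return the system that owns a question category, or fail loudly."""
    try:
        return MEASUREMENT_ROLES[question_category]
    except KeyError:
        # An undocumented category is a governance gap, not a default.
        raise ValueError(f"No owner documented for {question_category!r}") from None
```

Failing on an unknown category is a deliberate choice here: a question nobody owns is exactly the kind that ends up being answered by whichever dashboard is open.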

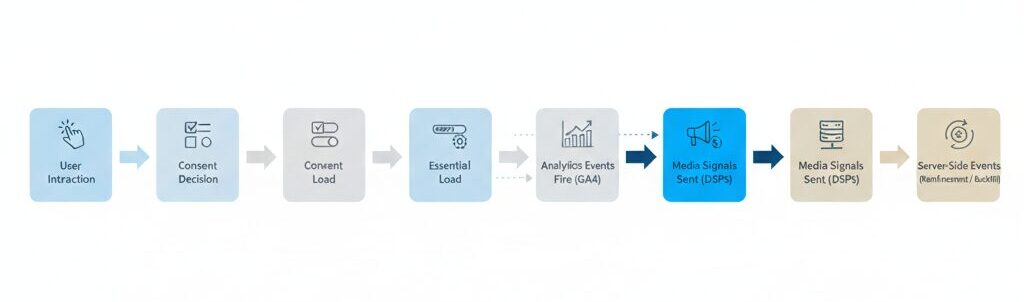

2. Design and Document Event Sequencing

Most teams focus on what events fire. Fewer teams focus on when they fire.

In a compliant, modern setup, the sequence typically looks like this:

User interaction occurs first. Consent decisions follow. Essential scripts load. Analytics events fire once consent allows. Media signals are sent. Server-side events are used as reinforcement or backfill, not as a replacement for clarity.

If GA4 events are delayed due to consent, but TikTok receives server-side events immediately, divergence is expected. This is not an error state; it is an architectural outcome.

Professionally managed teams document this sequence and socialise it internally so stakeholders understand why timing differences exist.
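The timing effect can be illustrated with a toy model. This is a deliberately simplified sketch, not a description of how GA4 or TikTok work internally: the `Journey` type, the function names, and the assumption that a client-side event is held until consent while a server-side event is sent straight away are all illustrative, and they mirror only the scenario described above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Journey:
    conversion_at: float                 # seconds since the first interaction
    consent_granted_at: Optional[float]  # None means consent was never given


def client_observed_at(journey: Journey) -> Optional[float]:
    """Client-side analytics sees the conversion only once consent allows."""
    if journey.consent_granted_at is None:
        return None
    return max(journey.conversion_at, journey.consent_granted_at)


def server_observed_at(journey: Journey, server_latency: float = 0.0) -> float:
    """Server-side event is assumed to be sent as soon as the conversion occurs."""
    return journey.conversion_at + server_latency


def timing_gap(journey: Journey) -> Optional[float]:
    """Seconds by which the client-side observation trails the server-side one."""
    client = client_observed_at(journey)
    if client is None:
        return None  # one system never sees the conversion at all
    return client - server_observed_at(journey)
```

A journey with `conversion_at=30.0` and `consent_granted_at=45.0` yields a gap of 15 seconds, and a journey with no consent yields `None`: the same user action, recorded at different moments or by only one system.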

For a practical walkthrough of how TikTok’s Events API works alongside GTM in modern setups, this video provides a clear 2026-ready perspective on conversion tracking.

3. Align Conversion Definitions, Not Event Names

A shared event name does not guarantee a shared meaning.

A “purchase” in GA4 may represent a completed transaction confirmed client-side. A “conversion” in TikTok may represent an inferred outcome tied to ad exposure, including view-through logic.

Expert teams explicitly define:

- When the conversion is recorded

- From which layer (client, server, or platform)

- With which identifiers

- For what analytical purpose

Alignment at the definition level prevents false debates about accuracy.
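The four points above can be captured as a small record per system, so that two conversions with the same name can be compared on meaning rather than label. This is a minimal sketch: the `ConversionDefinition` type and the example values are hypothetical placeholders, not official definitions from either platform.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Layer(Enum):
    CLIENT = "client"
    SERVER = "server"
    PLATFORM = "platform"


@dataclass(frozen=True)
class ConversionDefinition:
    system: str
    event_name: str
    recorded_when: str            # when the conversion is recorded
    layer: Layer                  # from which layer
    identifiers: Tuple[str, ...]  # with which identifiers
    purpose: str                  # for what analytical purpose


def same_meaning(a: ConversionDefinition, b: ConversionDefinition) -> bool:
    """Compare definitions on substance, ignoring system and event name."""
    return (a.recorded_when, a.layer, a.identifiers, a.purpose) == (
        b.recorded_when, b.layer, b.identifiers, b.purpose
    )


# Illustrative values only; replace with your own documented definitions.
ga4_purchase = ConversionDefinition(
    system="GA4", event_name="purchase",
    recorded_when="transaction confirmed client-side",
    layer=Layer.CLIENT, identifiers=("transaction_id",),
    purpose="on-site behaviour analysis",
)
tiktok_conversion = ConversionDefinition(
    system="TikTok", event_name="purchase",
    recorded_when="outcome attributed to ad exposure, including view-through",
    layer=Layer.PLATFORM, identifiers=("event_id",),
    purpose="ad optimisation",
)
```

Here `same_meaning(ga4_purchase, tiktok_conversion)` returns `False` even though both events are called `purchase`, which is the point of defining conversions by meaning rather than by name.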

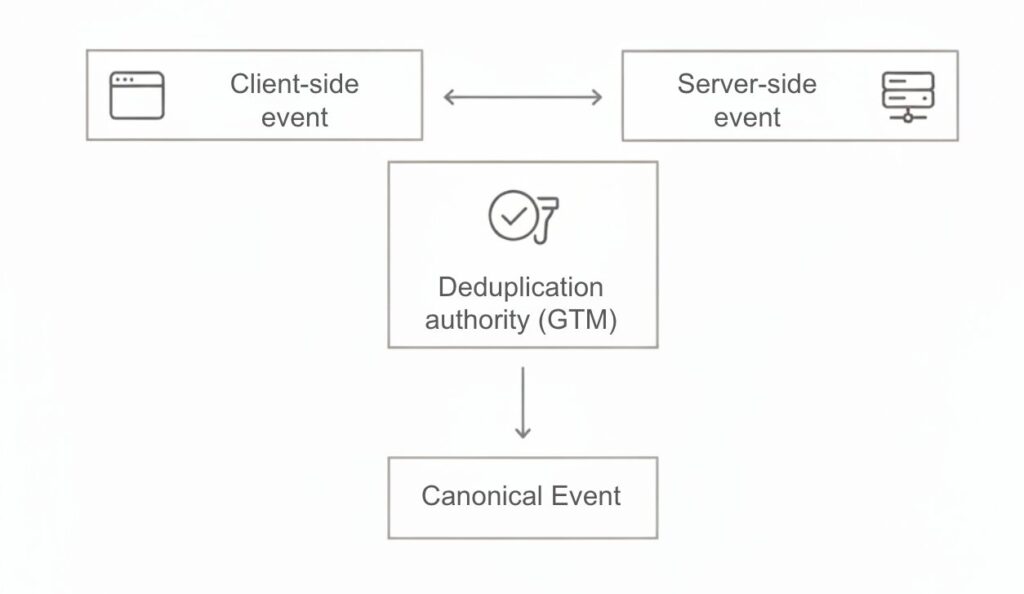

4. Implement Deduplication as a Design Choice

When both client-side and server-side events are present, duplication is not a risk; it is a certainty.

The professional question is not whether duplication exists, but where it is resolved.

Event IDs must be consistent. One system must be designated as the deduplication authority. Teams must accept that not all platforms deduplicate identically and that perfect convergence is neither realistic nor required.

This decision should be documented as part of the measurement architecture, not left to trial and error.
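As a sketch of what resolving duplication in one designated place might look like, the function below keeps one event per shared event ID and prefers a chosen layer when both copies exist. The dictionary keys (`event_id`, `layer`) and the choice of `server` as the preferred layer are assumptions for illustration. Ad platforms apply their own deduplication rules, which this code does not reproduce.

```python
from typing import Dict, Iterable, List


def deduplicate(events: Iterable[Dict[str, str]],
                preferred_layer: str = "server") -> List[Dict[str, str]]:
    """Keep one event per event_id, preferring the designated layer."""
    kept: Dict[str, Dict[str, str]] = {}
    for event in events:
        event_id = event["event_id"]
        current = kept.get(event_id)
        if current is None:
            kept[event_id] = event
        elif (event["layer"] == preferred_layer
              and current["layer"] != preferred_layer):
            # Same conversion seen twice: the preferred layer wins.
            kept[event_id] = event
    return list(kept.values())
```

This only works if the client-side and server-side copies of a conversion carry the same event ID, which is why consistent IDs come first in the list of requirements.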

5. Reset Reporting Expectations

Finally, reporting maturity requires expectation management.

GA4 introduces processing delays. Media platforms apply modelling and attribution logic. Same-day alignment is rarely a meaningful benchmark.

Professionally run organisations report with:

- Defined lag windows

- Clear attribution scopes

- Explicit caveats on modelled data

This shifts the conversation from “why don’t these numbers match?” to “what decision is this data fit to support?”
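These three reporting habits can be attached to each number mechanically, so that a figure never travels without its context. The sketch below is illustrative only: the `ReportedMetric` type, the `caveats` helper, and the idea of expressing the lag window in whole days are assumptions, and the right lag for any platform has to come from your own observation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass(frozen=True)
class ReportedMetric:
    name: str
    value: int
    attribution_scope: str  # stated in the reporting team's own terms
    modelled: bool          # True if the platform applies modelling


def caveats(metric: ReportedMetric, report_day: date,
            today: date, lag_days: int) -> List[str]:
    """Return the notes that should accompany a metric in a report."""
    notes: List[str] = []
    if today - report_day < timedelta(days=lag_days):
        notes.append(
            f"inside {lag_days}-day lag window; figures may still change"
        )
    if metric.modelled:
        notes.append("includes modelled data")
    notes.append(f"attribution scope: {metric.attribution_scope}")
    return notes
```

A metric reported one day after the fact with a three-day lag window would carry all three notes; the same metric a week later would drop the first.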

The dashboard didn’t fail that morning.

What failed was the assumption that all data systems observe reality in the same way, at the same time, for the same purpose.

Once that assumption is removed, the work becomes clearer. Measurement becomes design. Discrepancies become explainable. Confidence returns.

That’s the moment a marketer stops trusting the dashboard and starts trusting the system behind it.