Anna didn’t wake up one morning thinking about AI consent.

She was just browsing.

A pair of shoes she had looked at last week appeared again. The homepage rearranged itself slightly. The product recommendations felt… accurate. Helpful, even. At first.

Then something shifted.

She noticed ads following her across sites she’d never shared data with. A recommendation referenced something she had only searched once, late at night, on her phone. She paused, not because the technology was impressive, but because she wasn’t sure when she had agreed to this.

Anna didn’t feel angry. She felt unsure.

And that feeling, a quiet uncertainty, is where the conversation about AI consent and personalization best practices really begins.

From Anna’s point of view, consent is not a legal concept. It’s emotional.

She remembers clicking a cookie banner. She remembers it being fast. She doesn’t remember reading it. What she does remember is expecting the website to work and nothing more.

What Anna didn’t realize was that behind the scenes, AI systems might be:

- Learning her browsing patterns

- Predicting future purchases

- Grouping her into behavioral segments

- Feeding those insights into personalization and advertising models

None of that was clearly explained to her in a way she could understand at the moment of consent.

And that’s the first disconnect.

Most users like Anna don’t mind personalization. They mind surprise.

AI consent breaks down not when data is used, but when the outcome feels bigger than the agreement. When personalization starts to feel like surveillance, trust erodes, even if the data use is technically lawful.

For Anna, good consent would have meant:

- Knowing what kind of personalization was happening

- Understanding whether AI was learning over time

- Having an easy way to change her mind later

She didn’t want complexity. She wanted honesty.

Where AI Changes the Consent Conversation

Traditional consent assumed something static.

AI is not static.

AI systems adapt, infer, and evolve. The data Anna provided yesterday might generate new insights tomorrow, insights she never explicitly agreed to.

This is why AI consent feels different from cookie consent.

From Anna’s perspective:

- Consent feels one-time

- AI behavior feels ongoing

That mismatch is where discomfort starts.

When AI is involved, consent can’t just explain data collection. It must explain data behavior.

Not in technical terms. In human ones.

The Marketer’s Perspective: “We’re Not Trying to Be Creepy”

From the marketer’s side, the story looks very different.

AI tools promise better relevance, less waste, and more meaningful experiences. Personalization is positioned as a win-win:

- Users see content they care about

- Brands improve engagement and conversion

But marketers face a tension.

They rely on AI models that:

- Combine multiple signals

- Create probabilistic predictions

- Often operate as “black boxes”

The marketer may not always know exactly how an insight was generated, only that it performs well.

Here’s the uncomfortable truth:

Just because personalization works doesn’t mean it feels fair.

Best-practice marketers are starting to realize that consent is not a blocker. It’s a filter.

When consent is clear:

- Data quality improves

- Trust increases

- Opt-in users are more engaged

- Long-term performance stabilizes

From this perspective, AI consent isn’t about limiting capability. It’s about aligning expectations.

Good personalization doesn’t start with algorithms.

It starts with an agreement the customer would still recognize weeks later.

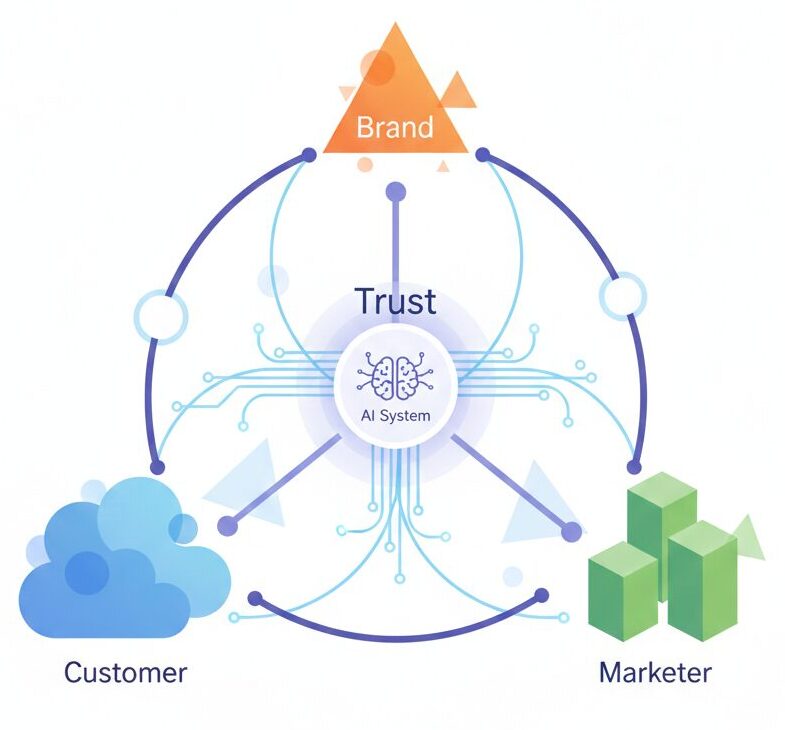

The Brand & Legal Perspective: “Trust Is a Business Asset”

For brand and legal teams, Anna’s discomfort represents risk, but also opportunity.

AI consent sits at the intersection of:

- Privacy law

- Consumer trust

- Brand reputation

- Long-term sustainability

Regulations like GDPR emphasize that consent must be informed and specific. But AI challenges that standard by introducing secondary effects: new inferences created after the fact.

The question legal teams increasingly ask is not:

“Is this allowed?”

But:

“Would a reasonable user expect this?”

Brands that treat consent as a design problem, not just a legal one, tend to make better decisions.

They document:

- What AI systems do today

- What they might reasonably evolve to do

- How users can understand and control that journey

From a brand standpoint, transparency becomes a differentiator. Not everything needs consent, but everything needs explanation.

What AI Consent Best Practices Actually Look Like

From Anna’s story, a few principles become clear.

1. Explain Outcomes, Not Just Inputs

Instead of listing data types, explain what users will experience.

Example:

“We use AI to recommend products based on what you view and buy.”

Not:

“We process behavioral data for optimization.”

2. Separate Functionality From Optimization

Users expect the site to work. They don’t automatically expect learning systems.

Make that distinction visible.
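One way to make that distinction visible is to model it directly in how consent is stored. The sketch below is illustrative only: the `Purpose` and `ConsentState` classes and the purpose names are hypothetical, and it assumes a site that treats "needed for the site to work" and "learning from behavior" as separate categories.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Purpose:
    key: str
    description: str  # plain-language outcome shown to the user
    essential: bool   # True: needed for the site to work at all


# Hypothetical purposes; the keys and wording are illustrative only.
PURPOSES = {
    "cart": Purpose("cart", "Remember what is in your basket.", essential=True),
    "recommendations": Purpose(
        "recommendations",
        "We use AI to recommend products based on what you view and buy.",
        essential=False,
    ),
}


@dataclass
class ConsentState:
    # Keys of the non-essential purposes the user has opted into.
    opted_in: set = field(default_factory=set)

    def allows(self, purpose_key: str) -> bool:
        purpose = PURPOSES[purpose_key]
        # Assumption of this sketch: essential functionality runs without
        # an opt-in, while anything that learns requires one.
        return purpose.essential or purpose_key in self.opted_in
```

With this shape, a user like Anna who never opts in still gets a working basket, while the recommendation model simply never sees her activity.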

3. Make Consent Reversible

AI systems learn over time. Consent should be just as flexible.

Changing your mind should not feel like punishment.
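In practice, reversibility can be as simple as treating consent as a history of decisions rather than a single flag. The following is a minimal sketch under that assumption; `ConsentLog` and its method names are hypothetical, not part of any consent platform.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentLog:
    # Append-only history of (timestamp, purpose, granted) decisions.
    events: list = field(default_factory=list)

    def _record(self, purpose: str, granted: bool) -> None:
        self.events.append((datetime.now(timezone.utc), purpose, granted))

    def grant(self, purpose: str) -> None:
        self._record(purpose, True)

    def withdraw(self, purpose: str) -> None:
        # Withdrawing takes exactly as much effort as granting.
        self._record(purpose, False)

    def is_granted(self, purpose: str) -> bool:
        # The most recent decision wins; no decision means no consent.
        for _, recorded_purpose, granted in reversed(self.events):
            if recorded_purpose == purpose:
                return granted
        return False
```

Keeping the full history, instead of overwriting a flag, also leaves a record of when the user changed their mind.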

4. Avoid “Silent Learning”

If AI meaningfully changes how content, pricing, or ads behave, users should be aware, even if consent was technically collected earlier.
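One possible way to catch silent learning is to version the plain-language explanation of each AI feature and compare it with the version the user last saw. This is a sketch under stated assumptions: the `Explanation` class, the `CURRENT_EXPLANATIONS` table, and its example text are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Explanation:
    version: int  # bumped whenever the AI's behavior meaningfully changes
    text: str     # what the user is told, in human terms


# Hypothetical current state: version 2 adds an effect on search ordering.
CURRENT_EXPLANATIONS = {
    "recommendations": Explanation(
        version=2,
        text="We use AI to recommend products and to order search results "
             "based on what you view and buy.",
    ),
}


def purposes_to_reexplain(seen_versions: dict) -> list:
    """Return purposes whose explanation changed since the user last saw it.

    `seen_versions` maps a purpose key to the version the user was shown.
    """
    return sorted(
        key
        for key, explanation in CURRENT_EXPLANATIONS.items()
        if explanation.version > seen_versions.get(key, 0)
    )
```

A user who agreed under version 1 would be shown the updated text on their next visit instead of discovering the change on their own.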

If Anna revisited the site a month later and understood:

- Why recommendations looked the way they did

- What AI was doing behind the scenes

- How to adjust her preferences

She wouldn’t feel watched.

She’d feel respected.

That’s the difference between compliant personalization and trusted personalization.

The Bottom Line

AI consent isn’t about slowing innovation.

It’s about keeping the human in the loop.

When customers like Anna understand what’s happening, marketers gain better data, brands build resilience, and legal teams stop playing defense.

Personalization works best when consent feels like a conversation, not a trapdoor. Read more here about consent in AI applications.